Swarm Intelligence: The Battle to Control a Thousand Simple Minds

The problem isn't a lack of intelligence—it's a fundamental flaw in how we define control

What if true intelligence isn’t about a single genius, but about a thousand idiots following simple rules?

I have pondered this question for over a decade. It’s the secret heart of swarm intelligence, one of the most fascinating and counter-intuitive fields in all of robotics.

The Magic of Swarm Intelligence: Simple Rules, Complex Behavior

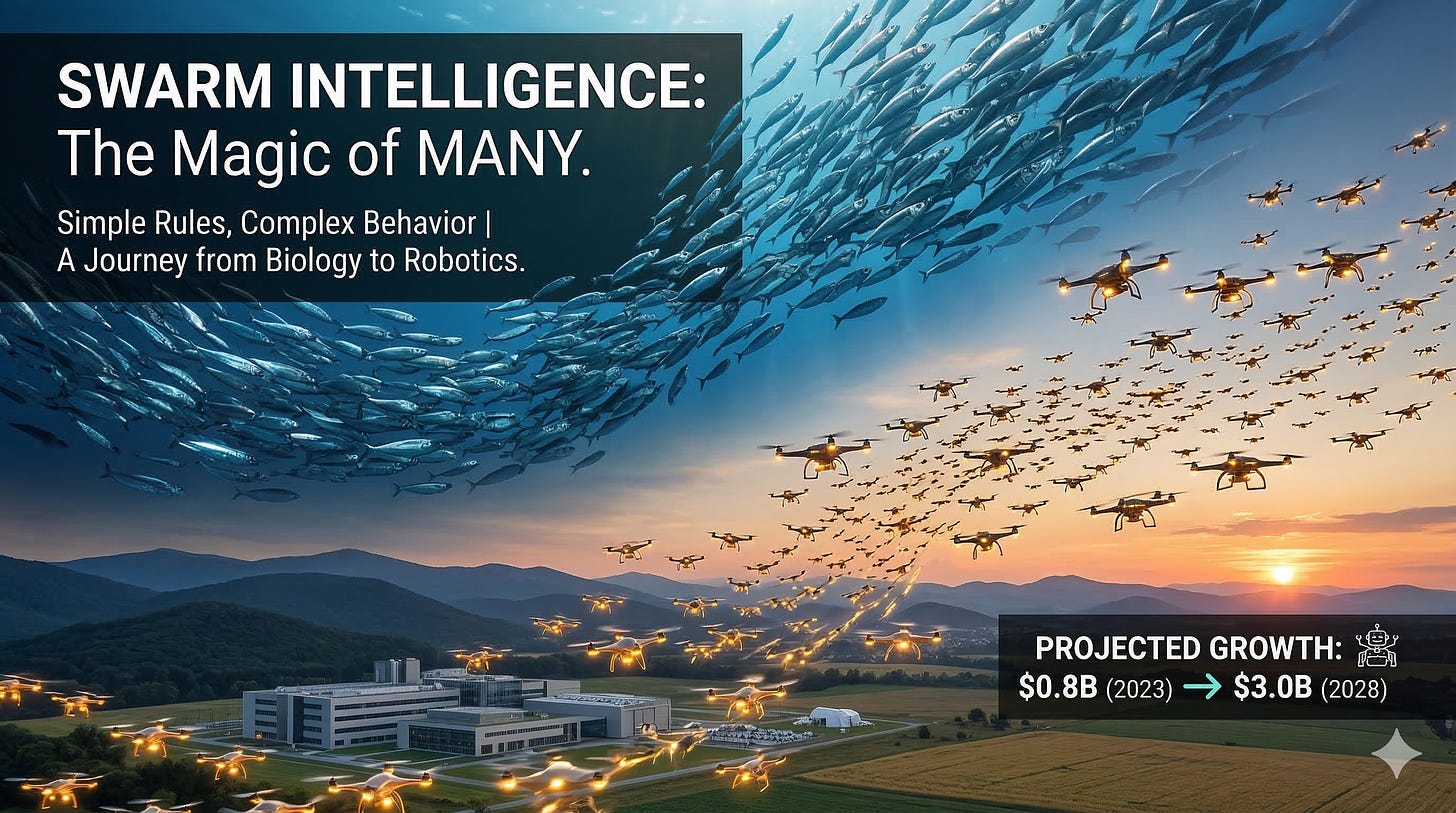

Imagine a shimmering cloud of thousands of fish, moving as one single entity. They twist and turn, avoiding obstacles and splitting apart to flow around a predator, only to reform perfectly on the other side.

It looks like a complex dance choreographed by a leader.

But there is no leader. There is no blueprint. There is no communication about the grand plan.

Each fish follows three incredibly simple rules while paying attention to just one or two neighbors at a time, and not necessarily the closest ones. Stay close to your neighbors. Don’t bump into them. Point in the same general direction as they do.

That’s it.

From these three simple, local interactions, an incredibly complex and intelligent global behavior emerges. The swarm becomes more than the sum of its parts. It becomes a single, adaptive superorganism. This is the magic of emergence, and it’s what inspired a generation of roboticists, including me.
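The three rules above are essentially the cohesion, separation, and alignment rules of Craig Reynolds's classic "boids" model. Below is a minimal Python sketch under stated assumptions. Each agent attends only to its `K` nearest neighbors, which is a simplification, since real fish may not pick the closest ones. Every constant is an illustrative, untuned value rather than anything from the original work.

```python
import math
import random

# Minimal 2D boids sketch of the three rules. Assumptions, not from the
# article: each agent attends to its K nearest neighbors, and every constant
# below is an illustrative, untuned value.
K = 2                # neighbors each agent attends to
W_COHESION = 0.01    # rule 1: pull toward neighbors' mean position
W_SEPARATION = 0.05  # rule 2: push away from neighbors closer than MIN_DIST
W_ALIGNMENT = 0.1    # rule 3: steer toward neighbors' mean velocity
MIN_DIST = 1.0
MAX_SPEED = 1.0


def nearest(i, pos, k):
    """Indices of the k agents closest to agent i, excluding i itself."""
    others = [j for j in range(len(pos)) if j != i]
    others.sort(key=lambda j: math.dist(pos[i], pos[j]))
    return others[:k]


def step(pos, vel):
    """Apply all three rules once to every agent; return new (pos, vel)."""
    new_vel = []
    for i, ((px, py), (vx, vy)) in enumerate(zip(pos, vel)):
        nbrs = nearest(i, pos, K)
        n = len(nbrs)
        cx = sum(pos[j][0] for j in nbrs) / n
        cy = sum(pos[j][1] for j in nbrs) / n
        ax = sum(vel[j][0] for j in nbrs) / n
        ay = sum(vel[j][1] for j in nbrs) / n
        # Rules 1 and 3: cohesion toward neighbors, alignment with them.
        vx += W_COHESION * (cx - px) + W_ALIGNMENT * (ax - vx)
        vy += W_COHESION * (cy - py) + W_ALIGNMENT * (ay - vy)
        # Rule 2: separation from any attended neighbor that is too close.
        for j in nbrs:
            if math.dist((px, py), pos[j]) < MIN_DIST:
                vx += W_SEPARATION * (px - pos[j][0])
                vy += W_SEPARATION * (py - pos[j][1])
        speed = math.hypot(vx, vy)
        if speed > MAX_SPEED:  # cap speed so agents can't accelerate forever
            vx, vy = vx * MAX_SPEED / speed, vy * MAX_SPEED / speed
        new_vel.append((vx, vy))
    new_pos = [(px + vx, py + vy) for (px, py), (vx, vy) in zip(pos, new_vel)]
    return new_pos, new_vel


if __name__ == "__main__":
    random.seed(0)
    pos = [(random.uniform(0, 10), random.uniform(0, 10)) for _ in range(30)]
    vel = [(random.uniform(-1, 1), random.uniform(-1, 1)) for _ in range(30)]
    for _ in range(200):
        pos, vel = step(pos, vel)
```

Calling `step` repeatedly on random initial positions and velocities is one way to explore whether coordinated groups form. Nothing in the sketch guarantees a single unified school. Note that `nearest` assumes the swarm has more than `K` agents.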

My Journey into the Swarm: When High-Dimensional Thinking Hit a Wall

About ten years ago, I was working on swarm intelligence. We were obsessed with a fundamental question: how do we control this magic?

We took what felt like a sophisticated approach. We assumed the emergent behavior of the swarm lived in a high-dimensional space (the positions and headings of every fish at every moment), and that our job was to find the underlying structure.

Think of it like trying to describe a symphony using only a volume knob. A symphony has thousands of notes played by dozens of instruments at different moments—that’s the high-dimensional space. We hoped to find just a few simple controls—like turning up the violins or down the brass—that could capture the essence of the entire performance and direct it.

We tried to collapse this complexity down to a low-dimensional space where we could find simple controls to command the swarm. We were looking for the main melody in a wall of sound, believing that if we could isolate those essential elements, we could conduct the entire swarm with just a few simple commands.
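The article doesn't name the reduction method the team used. One standard example is principal component analysis (PCA), which finds the direction along which a set of swarm snapshots varies most. The sketch below assumes each snapshot is flattened into a single vector, for instance every fish's x, y, and heading. It then uses power iteration in pure Python to find the top component. All function names are hypothetical.

```python
import math
import random


def mean_center(rows):
    """Subtract the per-feature mean from every snapshot vector."""
    n, d = len(rows), len(rows[0])
    mu = [sum(r[k] for r in rows) / n for k in range(d)]
    return [[r[k] - mu[k] for k in range(d)] for r in rows]


def covariance(rows):
    """Sample covariance matrix of mean-centered rows."""
    n, d = len(rows), len(rows[0])
    return [[sum(r[a] * r[b] for r in rows) / (n - 1) for b in range(d)]
            for a in range(d)]


def top_component(cov, iters=500, seed=0):
    """Leading eigenvector of a covariance matrix via power iteration."""
    rng = random.Random(seed)
    v = [rng.uniform(-1, 1) for _ in cov]
    for _ in range(iters):
        w = [sum(c * x for c, x in zip(row, v)) for row in cov]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:  # degenerate start vector; stop rather than divide by 0
            break
        v = [x / norm for x in w]
    return v


def project(rows, component):
    """Collapse each high-dimensional snapshot to one score along component."""
    return [sum(r * c for r, c in zip(row, component)) for row in rows]
```

`project` turns each high-dimensional snapshot into a single score. That score is the kind of "volume knob" the team hoped would be controllable.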

We built a simulator of a fish swarm, believing we could map its group mind and steer it like a ship.

Obviously, it did not work.

We were trying to find a master control panel for a system that doesn’t have one. We were looking for a smooth, continuous dial to control something that behaves more like a network of thousands of on-off switches. The obstacle we kept hitting is the one that still drives the entire field: we haven’t figured out which individual, low-level behaviors produce a desired emergent behavior.

New Frontiers: From Global Control to Local Interactions

The failure of that old approach taught us something crucial. We were looking at the problem from the wrong end.

The real problem ran deeper than we initially understood, and it had two parts.

The curse of non-stationarity: the swarm’s effective rules would change dramatically depending on context. A school of fish might switch from polarized swimming to a swirling vortex in seconds, completely changing its underlying dynamics. Our low-dimensional control surface was like a map whose terrain kept changing while we were trying to read it.
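Studies of fish schools commonly distinguish the polarized and swirling regimes with two order parameters. Polarization is the length of the average unit heading, near 1 when everyone swims the same way. Milling is the average rotation of fish around the school's center, near 1 in a vortex. Below is a minimal sketch. The `hi` and `lo` thresholds in the hypothetical `regime` helper are illustrative assumptions, not values from the article.

```python
import math


def _unit(x, y):
    """Normalize a 2D vector; the zero vector stays zero."""
    n = math.hypot(x, y)
    return (x / n, y / n) if n else (0.0, 0.0)


def polarization(vel):
    """Length of the mean unit heading: ~1 polarized, ~0 headings cancel."""
    us = [_unit(*v) for v in vel]
    return math.hypot(sum(u[0] for u in us), sum(u[1] for u in us)) / len(us)


def milling(pos, vel):
    """Mean of (unit radius x unit heading) about the centroid: ~1 in a vortex."""
    cx = sum(p[0] for p in pos) / len(pos)
    cy = sum(p[1] for p in pos) / len(pos)
    total = 0.0
    for (px, py), v in zip(pos, vel):
        rx, ry = _unit(px - cx, py - cy)
        ux, uy = _unit(*v)
        total += rx * uy - ry * ux  # z-component of the 2D cross product
    return abs(total) / len(pos)


def regime(pos, vel, hi=0.65, lo=0.35):
    """Label the current collective state; thresholds are illustrative."""
    p, m = polarization(vel), milling(pos, vel)
    if p > hi and m < lo:
        return "polarized"
    if m > hi and p < lo:
        return "milling"
    return "transitional"
```

Tracking both numbers over time makes the problem concrete. When the school flips between regimes, its state jumps to a different region of this two-number space, so a controller fitted in one regime has no particular reason to work in the other.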

The sparsity problem: recent research suggests that each fish isn’t processing information from the entire school. Instead, it uses what has been called a “hard attention mechanism,” selectively focusing on just one or two neighbors to make its decisions. This extreme sparsity meant our approach of averaging the entire swarm’s state was like trying to understand a conversation by listening to everyone in a crowded stadium at once.
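Here is a toy comparison of whole-school averaging, soft attention, and hard attention. Each neighbor is reduced to a heading vector plus a relevance score. The scores are simply given as inputs; how real fish, or models fitted to them, assign relevance is outside this sketch. The function names are hypothetical.

```python
import math


def average_all(headings):
    """Baseline: every neighbor counts equally (the 'whole stadium' approach)."""
    n = len(headings)
    return (sum(h[0] for h in headings) / n, sum(h[1] for h in headings) / n)


def soft_attention(headings, scores):
    """Softmax-weighted mix: every neighbor still contributes a little."""
    m = max(scores)  # subtract the max for numerical stability
    w = [math.exp(s - m) for s in scores]
    z = sum(w)
    return (sum(wi * h[0] for wi, h in zip(w, headings)) / z,
            sum(wi * h[1] for wi, h in zip(w, headings)) / z)


def hard_attention(headings, scores, k=1):
    """Keep only the k highest-scoring neighbors and ignore the rest entirely."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    return average_all([headings[i] for i in top])
```

Consider nine neighbors heading east and one high-scoring neighbor heading north. Averaging returns a mostly eastward vector, while hard attention with `k=1` returns the northward heading alone.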

Instead of trying to control the whole swarm from above, the field has shifted to understanding the swarm from the inside out. We stopped trying to be architects drawing a blueprint and started trying to be gardeners, cultivating the right conditions for intelligence to grow.

This changes everything for robotics. Instead of designing a central brain, we now focus on designing the perfect local rules. We use evolutionary algorithms to let hundreds of robot swarms in simulation “evolve” their own simple rules to solve a task, like finding a target or exploring a building. The most successful rule sets are then programmed into our physical robots.
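Below is a minimal sketch of that evolve-then-deploy loop. It assumes each candidate rule set is just three weights (cohesion, separation, alignment) in [0, 1]. `simulate_fitness` is a hypothetical stand-in: a real pipeline would run a swarm simulation on the task and score the result. This one instead rewards closeness to an arbitrary target so the loop has something to find. Selection here is simple truncation plus elitism, which is only one of many possible designs.

```python
import random


def simulate_fitness(weights):
    """Hypothetical stand-in for a swarm simulation; higher is better.
    Rewards weights near an arbitrary, illustrative target."""
    target = (0.2, 0.5, 0.8)
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


def mutate(weights, rng, sigma=0.05):
    """Gaussian perturbation of each weight, clipped to [0, 1]."""
    return tuple(min(1.0, max(0.0, w + rng.gauss(0.0, sigma))) for w in weights)


def crossover(a, b, rng):
    """Uniform crossover: each weight comes from one parent at random."""
    return tuple(x if rng.random() < 0.5 else y for x, y in zip(a, b))


def evolve(pop_size=50, generations=100, elite=5, seed=0):
    """Evolve rule-weight triples; return the best one and per-generation bests."""
    rng = random.Random(seed)
    pop = [tuple(rng.random() for _ in range(3)) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=simulate_fitness, reverse=True)
        history.append(simulate_fitness(pop[0]))
        parents = pop[: pop_size // 2]  # truncation selection: top half breeds
        children = pop[:elite]          # elitism: best rule sets survive as-is
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        pop = children
    best = max(pop, key=simulate_fitness)
    return best, history
```

Because the top `elite` rule sets survive unchanged, the best fitness never decreases from one generation to the next. In the workflow described above, `best` is the rule set that would then be programmed into the physical robots.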

But here’s what makes this decade transformative: swarm robotics is no longer just an academic curiosity. The swarm robotics industry is projected to grow from $0.8 billion in 2023 to $3.0 billion by 2028—a 30.9% annual growth rate. In October 2024, France’s Thales Group demonstrated their COHESION system—a drone swarm with unprecedented autonomy that could coordinate tactics, share target data, and adapt to mission phases with minimal human intervention.
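The growth figures can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1.

```python
def cagr(start, end, years):
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end / start) ** (1 / years) - 1


rate = cagr(0.8, 3.0, 2028 - 2023)  # market size in billions of USD
print(f"{rate:.1%}")  # 30.3%
```

With the rounded endpoints quoted here, the formula gives about 30.3% rather than 30.9%. The small gap presumably comes from rounding in the reported market figures.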

In August 2023, more than a year before the Thales demonstration, the Pentagon launched the Replicator initiative, aiming to field thousands of low-cost autonomous drones and other systems by 2025. In early 2025, Saab and the Swedish Armed Forces announced a program enabling soldiers to control up to 100 drones simultaneously using swarm AI, slated for testing in Arctic conditions.

The applications extend far beyond defense. Mining operations are exploring swarm robots based on ant, firefly, and honeybee behaviors for ore detection and extraction. Agricultural drones work as swarms to monitor crops and target weeds with precision.

We are learning to build swarms not by commanding them, but by giving them the right simple instincts and letting them figure out the rest.

What other complex problems could we solve not by adding more intelligence, but by distributing it more cleverly?

If you found this glimpse into the future of robotics fascinating, make sure you subscribe. We explore these ideas together every week.